Artificial Intelligence, or AI, has been a buzzword for a while now, but few people know its true origins. The concept of machines emulating human intelligence has been around for centuries, and the technology has been developing rapidly over the past few decades. In this article, we will take a journey through time and discover the history of AI.

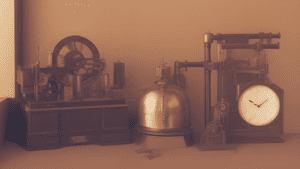

Ancient Times: Automata and Early Mechanical Devices

In ancient times, people were fascinated by machines that could perform tasks on their own. These early mechanical devices were often inspired by nature and the movements of animals. One of the most famous examples is the Antikythera mechanism. Recovered in 1901 from a shipwreck off the Greek island of Antikythera, the device is generally dated to the second or first century BCE. It consisted of a complex system of gears and was used to predict the positions of the sun, moon, and planets, as well as lunar and solar eclipses.

The Antikythera mechanism was an incredible feat of engineering for its time and is often considered to be one of the first examples of a complex mechanical device. It was also a testament to the advanced knowledge of astronomy that existed in ancient Greece.

Other examples of ancient automata include the chessboard robot. This device was reportedly built in the 9th century and used a hidden human operator to move the pieces on the chessboard. The operator would sit inside the machine and use levers and pulleys to move the pieces, making it appear as though the machine was moving them on its own.

In addition to these early mechanical devices, there were also other types of automata that were created during ancient times. These included statues that could move and speak, as well as water clocks and other timekeeping devices.

Overall, the development of automata and early mechanical devices during ancient times was an important milestone in the history of technology. It paved the way for future innovations and helped to lay the foundation for the modern world we live in today.

Late 1700s – Early 1800s: The Industrial Revolution and Early Automata

The Industrial Revolution was a period of significant change that transformed the way goods were produced, and it had a profound impact on society. During this time, there were many advances in mechanical technology, which led to the development of early automata.

One of the most famous examples of early automata from this time is the Mechanical Turk. The Turk was a chess-playing automaton built in 1770 by Wolfgang von Kempelen, a Hungarian inventor serving at the Habsburg court. The Turk was a life-size figure of a man sitting at a cabinet, and it appeared to be capable of playing chess on its own, defeating many notable opponents throughout Europe and America.

However, the reality was that the Turk was not capable of playing chess on its own. Instead, it was operated by a human chess player who was hidden inside the machine. The player sat on a small platform inside the Turk and used a series of levers and pulleys to control the movements of the chess pieces on the board.

Despite the fact that the Mechanical Turk was not truly automated, it was an impressive feat of engineering for its time and became famous for its ability to defeat skilled chess players. It toured throughout Europe and America for over 80 years, attracting crowds of people who were amazed by its apparent ability to play chess on its own.

In addition to the Mechanical Turk, there were many other examples of early automata that were developed during the Industrial Revolution. These included machines that could perform simple tasks like weaving and spinning, as well as more complex devices like the Jacquard loom, which used punch cards to control the weaving of intricate patterns.

Overall, the Industrial Revolution was a critical period in the development of mechanical technology and automation. It laid the foundation for the modern era of manufacturing and set the stage for future advancements in automation and robotics.

1950s: The Birth of Artificial Intelligence

In 1955, John McCarthy, an American computer and cognitive scientist, coined the term “artificial intelligence” or “AI” in a proposal for a research workshop to be held the following year. This marked the beginning of a new era in computing, where machines were no longer limited to performing basic arithmetic operations but were instead being developed to simulate human-like reasoning and decision-making.

At the time, computers were still in their infancy and were mainly used for scientific and military purposes. They were large, expensive, and required specialized knowledge to operate. However, McCarthy saw the potential for these machines to be used for more than just number-crunching.

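The proposal for the Dartmouth workshop, which was held in the summer of 1956, was written by McCarthy together with Marvin Minsky, Nathaniel Rochester, and Claude Shannon. It rested on the conjecture that every aspect of learning or any other feature of intelligence can in principle be described so precisely that a machine can be made to simulate it. The organizers envisioned machines that could use language, form abstractions and concepts, solve kinds of problems then reserved for humans, and improve themselves.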

This idea was revolutionary at the time, and it sparked a new wave of research and development in the field of AI. Over the next few decades, researchers made significant strides in developing algorithms and techniques that could simulate human-like intelligence.

One of the early breakthroughs in AI was the development of expert systems in the 1970s. These were programs that could replicate the decision-making abilities of human experts in specific domains such as medicine, finance, and engineering. Expert systems were widely used in industry, but they were limited in their ability to generalize to new situations.

In the 1980s and 1990s, there was a renewed focus on developing machine learning algorithms that could enable machines to learn from data and improve their performance over time. This led to a revival of neural networks, an idea that dates back to the 1940s and 1950s and was inspired by the structure of the human brain.

Today, AI is a rapidly evolving field that is being used in a wide range of applications, from speech recognition and natural language processing to image and video analysis and autonomous vehicles. While the goal of creating machines that can match or surpass human intelligence is still far off, advances in AI are driving significant changes in industry, healthcare, and other fields, and the potential for future breakthroughs is immense.

1960s – 1970s: Rule-Based Expert Systems

In the 1960s and 1970s, rule-based expert systems were a significant area of research in the field of artificial intelligence. These systems were designed to solve complex problems by breaking them down into a set of rules that the computer could follow. The idea behind rule-based expert systems was to capture the knowledge and expertise of human experts and encode it into a set of rules that a computer could use to solve similar problems.
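To make the idea of "a set of rules that the computer could follow" concrete, here is a minimal sketch of a rule engine in Python. It uses forward chaining, meaning it keeps applying if-then rules to the known facts until nothing new can be concluded. The rules and fact names are made up for illustration and are not taken from any real expert system, and historical systems were far richer than this.

```python
# Hypothetical toy rules: (set of required facts, conclusion to add).
RULES = [
    ({"has_feathers"}, "is_bird"),
    ({"is_bird", "swims", "cannot_fly"}, "is_penguin"),
]


def forward_chain(facts, rules):
    """Apply if-then rules repeatedly until no new conclusion is added."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in rules:
            # A rule fires when all of its conditions are already known.
            if conditions <= known and conclusion not in known:
                known.add(conclusion)
                changed = True
    return known


observed = {"has_feathers", "swims", "cannot_fly"}
print(sorted(forward_chain(observed, RULES)))
```

Note that the second rule can only fire after the first one has added "is_bird", which is why the engine loops until the set of facts stops growing.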

One of the best-known rule-based expert systems was MYCIN, developed at Stanford University in the early 1970s by Edward Shortliffe. MYCIN was a medical expert system designed to identify the bacteria behind serious infections and recommend antibiotics, based on a patient's symptoms, test results, and medical history. It was designed to replicate the decision-making process of a human expert, using a set of rules and heuristics to reach a diagnosis.

Another, earlier example was DENDRAL, begun in 1965 by Edward Feigenbaum, Joshua Lederberg, and their colleagues at Stanford University. DENDRAL was designed to help chemists identify the molecular structure of organic compounds based on their mass spectrometry data. It used a set of rules to generate hypotheses about the molecular structure and then tested those hypotheses against the observed data to narrow down the candidates.

Rule-based expert systems were widely used in industry and government during the 1970s and 1980s. They were particularly useful in areas where there was a large amount of specialized knowledge that needed to be applied in a consistent and reliable manner. However, rule-based expert systems had some limitations, particularly when it came to dealing with uncertainty and ambiguity.

Despite their limitations, rule-based expert systems paved the way for further advances in the field of artificial intelligence. They demonstrated that it was possible to encode human expertise into a computer system and use it to solve complex problems. Today, the ideas and techniques behind rule-based expert systems continue to influence the development of more advanced AI systems, including machine learning algorithms and deep neural networks.

1969: The First AI Winter

In 1969, the Mansfield Amendment directed US defense agencies to fund mission-oriented research rather than open-ended basic research, an early step toward what is now known as the first AI winter. The term “AI winter” refers to a period of reduced funding and interest in AI research, something that has occurred several times in the history of the field.

The first AI winter was caused by a combination of factors, including the lack of significant progress in AI research, the high cost of hardware and software needed for AI research, and the inability of AI researchers to demonstrate practical applications for their work. As a result, the US government, along with other organizations and institutions, began to reduce funding for AI research.

The cuts deepened in the early 1970s, and the first AI winter is usually dated from about 1974 to 1980. It had a significant impact on the field: many AI researchers were forced to abandon their work or move on to other areas of research, and funding remained low for several years.

The AI winter also had a profound impact on the perception of AI among the general public. Many people began to view AI as a pipe dream or a science fiction concept, rather than a realistic field of research with practical applications.

However, the first AI winter eventually came to an end, as new breakthroughs and innovations in AI research led to renewed interest and funding. In the 1980s, the development of expert systems and the rise of machine learning led to a resurgence of interest in AI research, which helped to drive significant progress in the field.

Today, AI is once again a rapidly growing field with significant investment and interest from governments, corporations, and individuals around the world. While the first AI winter was a challenging time for AI researchers and the field as a whole, it ultimately served as a reminder of the importance of perseverance and continued innovation in the pursuit of scientific advancement.

1980s – 1990s: Neural Networks and Machine Learning

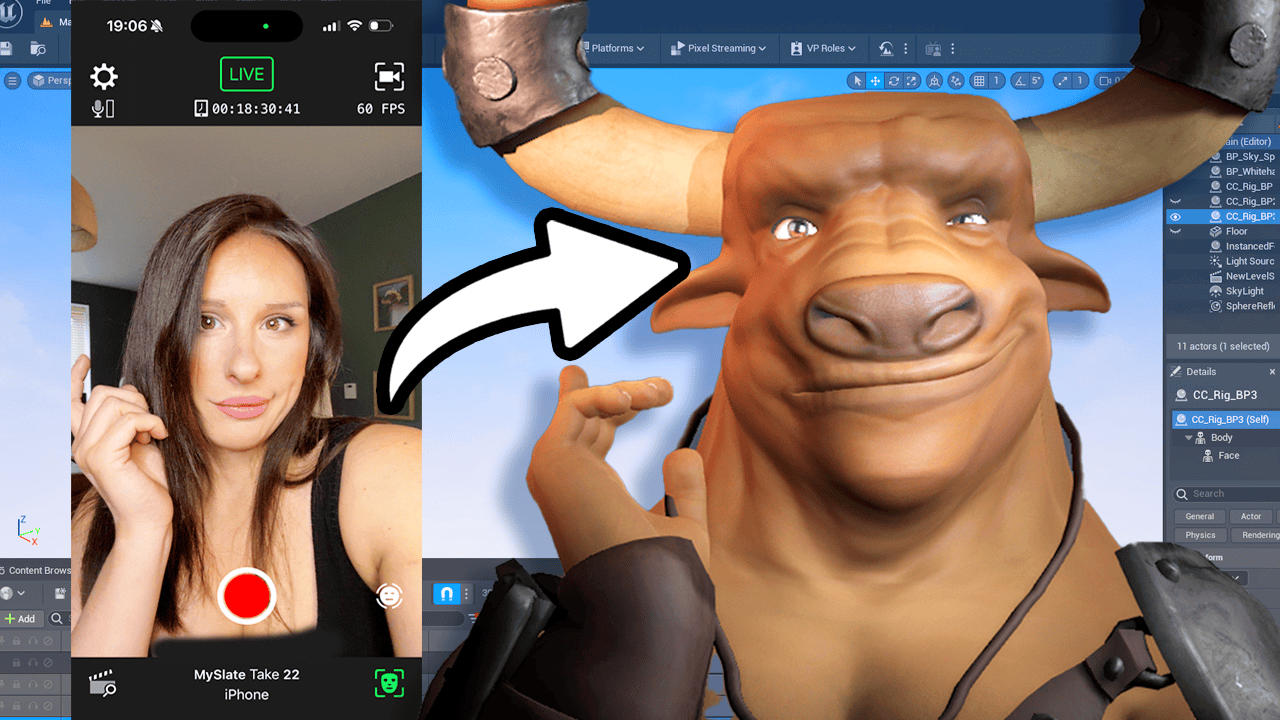

In the 1980s and 1990s, researchers began exploring the use of neural networks and machine learning techniques in the field of artificial intelligence. These technologies represented a significant departure from the earlier rule-based expert systems and offered new possibilities for creating intelligent machines that could learn and adapt over time.

Neural networks are computing systems loosely modeled on the structure and function of the human brain. They consist of interconnected nodes or “neurons” joined by weighted connections, and they learn by adjusting those weights in response to examples. Neural networks can be used for a wide range of tasks, from image and speech recognition to natural language processing and decision-making.
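As a rough illustration of how a single artificial neuron can learn, the sketch below trains one neuron with the classic perceptron rule to reproduce the logical AND of two inputs. The function names, the training data, and the settings are assumptions chosen for this example. Real neural networks stack many such units and use more sophisticated training methods.

```python
# Training examples for logical AND: ((input1, input2), expected output).
AND_SAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]


def predict(weights, bias, inputs):
    """Fire (return 1) if the weighted sum of the inputs is above zero."""
    total = weights[0] * inputs[0] + weights[1] * inputs[1] + bias
    return 1 if total > 0 else 0


def train_perceptron(samples, epochs=10, lr=1.0):
    """Nudge the weights after every wrong answer (perceptron rule)."""
    weights = [0.0, 0.0]
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            error = target - predict(weights, bias, inputs)
            # error is 0 when the neuron is right, so nothing changes.
            weights[0] += lr * error * inputs[0]
            weights[1] += lr * error * inputs[1]
            bias += lr * error
    return weights, bias


weights, bias = train_perceptron(AND_SAMPLES)
for inputs, target in AND_SAMPLES:
    print(inputs, "->", predict(weights, bias, inputs), "expected", target)
```

The neuron starts out knowing nothing (all weights are zero) and ends up with weights that separate the one "yes" case from the three "no" cases, purely by correcting its own mistakes.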

Machine learning involves creating algorithms that can learn from data and make predictions or decisions based on that data. These algorithms can be used to classify data, detect patterns, and make predictions. One of the key benefits of machine learning is its ability to improve over time as it receives more data, making it an ideal technique for tasks like image and speech recognition.

The development of neural networks and machine learning techniques in the 1980s and 1990s led to significant advances in AI research. Researchers were able to develop sophisticated algorithms that could learn and adapt to new data, opening up new possibilities for creating intelligent machines.

One of the most significant applications of neural networks and machine learning in the 1990s was in the field of computer vision. Researchers developed algorithms that could analyze and recognize images, opening up new possibilities for applications like facial recognition, object recognition, and autonomous vehicles.

Today, neural networks and machine learning continue to be a major focus of AI research. The development of deep neural networks and other advanced machine learning techniques has led to significant breakthroughs in areas like natural language processing, speech recognition, and computer vision. As these technologies continue to evolve, we can expect to see even more significant transformations in the field of artificial intelligence.

1997: Deep Blue Defeats Kasparov

The chess match between Deep Blue and Garry Kasparov in 1997 was a major turning point in the field of AI. Deep Blue was a computer system built by IBM specifically to play chess at the highest level, and Kasparov was the reigning world champion. The match was held in New York City and attracted a lot of media attention.

The match was played over six games. Kasparov won the first game, Deep Blue won the second, and the third, fourth, and fifth games were drawn, leaving the match tied at 2.5 points each. In the final game, Deep Blue emerged victorious, defeating Kasparov and winning the match by a score of 3.5 to 2.5.

The victory of Deep Blue over Kasparov was a significant achievement in the field of AI, as it demonstrated that machines could be developed to compete at a high level in complex games like chess. It also showed that machines were capable of analyzing and evaluating vast amounts of data in a short amount of time, far beyond what a human could do.

After the match, there was some controversy over whether or not Deep Blue’s victory was a true test of AI. Some argued that Deep Blue’s victory was due more to its brute computational power than to any real intelligence. Others argued that the machine’s ability to adapt and learn from past games made it a true example of AI.
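The "brute computational power" side of that debate refers to game-tree search: trying out moves, then the opponent's replies, and so on, to find a line of play that cannot be beaten. The sketch below applies exhaustive search to a tiny game chosen for this example (players alternately take 1 to 3 stones from a pile, and whoever takes the last stone wins). It is only an illustration of the general idea; Deep Blue's actual search and evaluation were far more elaborate and ran on specialized hardware.

```python
from functools import lru_cache


@lru_cache(maxsize=None)
def can_win(stones):
    """True if the player to move can force a win with `stones` left."""
    # Try every legal move; a move is winning if it leaves the opponent
    # in a position from which they cannot force a win.
    for take in (1, 2, 3):
        if take <= stones and not can_win(stones - take):
            return True
    # No winning move exists (this includes the empty pile, where the
    # opponent has just taken the last stone).
    return False


def best_move(stones):
    """Return a winning number of stones to take, or None if there is none."""
    for take in (1, 2, 3):
        if take <= stones and not can_win(stones - take):
            return take
    return None


print(best_move(7))  # prints 3: leaving 4 stones is a lost position
print(best_move(8))  # prints None: every move lets the opponent win
```

The program has no notion of strategy. It simply checks every possibility, which is feasible here because the game is tiny and quickly becomes infeasible as games grow more complex.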

Regardless of the debate, the match between Deep Blue and Kasparov was a pivotal moment in the history of AI. It showed that machines were capable of performing complex tasks that were once thought to be the sole domain of human intelligence. This breakthrough paved the way for further advances in the field of AI, including the development of machine learning algorithms and deep neural networks, which have led to even more significant breakthroughs in recent years.

2000s: Big Data and Deep Learning

In the 2000s, the growth of the internet and the resulting explosion of data led to a renewed interest in artificial intelligence. Big data analytics became an essential part of AI research, with the ability to analyze vast amounts of data to find patterns and insights. Deep learning, a subset of machine learning, also emerged during this time and became an area of intense research and development.

Big data analytics involves the use of advanced algorithms and tools to analyze and make sense of large and complex data sets. The explosion of data in the 2000s, including social media, digital devices, and other sources, meant that big data analytics became increasingly important for businesses and organizations looking to gain insights and improve decision-making.

Deep learning, a subset of machine learning, involves the use of artificial neural networks with multiple layers. These networks are designed to learn from data and make predictions based on that data. Deep learning algorithms can be used for a wide range of applications, including image and speech recognition, natural language processing, and decision-making.

One of the most significant breakthroughs in deep learning came in 2012 when a deep neural network called AlexNet won the ImageNet Large Scale Visual Recognition Challenge, a competition for computer vision systems. AlexNet’s success demonstrated the potential of deep learning to revolutionize computer vision and image recognition, opening up new possibilities for applications like self-driving cars and facial recognition.

Overall, the 2000s saw significant progress in the development of AI, driven by the explosion of data and the emergence of big data analytics and deep learning. These technologies have had a significant impact on many industries, including healthcare, finance, and manufacturing, and have paved the way for further advances in AI research and development.

2010s – Present: AI Goes Mainstream

The 2010s saw a significant surge in the mainstream adoption of AI applications in various industries. This period marked the beginning of the fourth industrial revolution or Industry 4.0, which involved the convergence of technology, data, and physical systems.

One of the key drivers of this AI revolution was the growth of big data and cloud computing. The rise of the internet and digital technologies led to the collection of vast amounts of data, which could be used to train machine learning algorithms and develop sophisticated AI models. With cloud computing, businesses could access these resources on demand, without the need for significant upfront investment in hardware and software.

This period saw the emergence of virtual assistants like Siri and Alexa, which became ubiquitous in many households around the world. These assistants used natural language processing and machine learning algorithms to understand user queries and provide personalized responses.

The use of AI also expanded into various industries, including healthcare, finance, and manufacturing. In healthcare, AI is being used for early disease detection, personalized treatment recommendations, and drug discovery. In finance, AI is used for fraud detection, trading algorithms, and risk management. In manufacturing, AI is used for predictive maintenance, quality control, and supply chain optimization.

The development of self-driving cars also gained significant attention in this period, with major tech companies like Google, Tesla, and Uber investing heavily in autonomous vehicle technology. Self-driving cars use a combination of machine learning algorithms, computer vision, and sensor technologies to navigate and make decisions on the road.

Overall, the 2010s saw a massive expansion of AI applications in everyday life and across various industries. With continued advances in AI technology, we can expect to see even more significant transformations in the way we live and work in the coming years.

2011: Watson Wins Jeopardy!

In 2011, IBM’s Watson computer made history by winning a Jeopardy! match against two former champions, Ken Jennings and Brad Rutter. The match was broadcast on national television and attracted a lot of attention from the media and the public.

Watson was a highly advanced computer system designed by IBM to understand and respond to natural language clues. It was named after Thomas J. Watson, IBM's first chief executive. The system was built using a combination of advanced algorithms, machine learning, and natural language processing techniques.

The Jeopardy! match was a significant breakthrough in the field of natural language processing. Jeopardy! is a game show in which the clues are phrased as answers and contestants must respond in the form of a question. The clues can be quite complex, often relying on wordplay, and require a deep understanding of language and culture. Watson’s ability to understand and respond to these clues in real time was a major achievement for the field of natural language processing.

Watson’s success in the Jeopardy! match was due to its ability to analyze vast amounts of data and make connections between seemingly unrelated pieces of information. It used a combination of statistical analysis and natural language processing to understand the questions and generate responses.

The victory of Watson over human champions was a significant moment in the history of AI. It demonstrated that machines were capable of understanding and responding to natural language, a task that was once thought to be the exclusive domain of human intelligence. It also showed that machine learning algorithms and natural language processing techniques were becoming increasingly sophisticated and capable of performing complex tasks.

2016: AlphaGo Defeats Lee Sedol

In 2016, Google DeepMind’s AlphaGo program made history by defeating world champion Lee Sedol four games to one in a five-game match of the ancient Chinese game of Go. Go is considered one of the most complex games in the world, with more possible board positions than there are atoms in the observable universe. AlphaGo’s victory was a significant achievement for the field of artificial intelligence and demonstrated the potential of deep learning and AI to solve complex problems.

AlphaGo was developed by DeepMind, a British AI research company acquired by Google in 2014. The system used a combination of deep neural networks and reinforcement learning to learn the game of Go and improve its gameplay over time. Reinforcement learning involves training a computer system by rewarding it for positive behavior and punishing it for negative behavior, allowing the system to learn from its mistakes and improve its performance.
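To show what learning from rewards and punishments can look like in code, here is a minimal sketch of tabular Q-learning, one of the simplest reinforcement learning methods. This is not how AlphaGo works, and the little "corridor" world, the reward values, and all the names below are assumptions invented for this example. An agent starts at the left end of a five-cell corridor, gets a small penalty for every step, and gets a reward for reaching the right end.

```python
import random

N_STATES = 5            # corridor cells 0..4; cell 4 is the goal
GOAL = N_STATES - 1
ACTIONS = (-1, +1)      # index 0 = step left, index 1 = step right


def step(state, action):
    """Move within the corridor and return (next_state, reward)."""
    nxt = min(max(state + action, 0), GOAL)
    reward = 1.0 if nxt == GOAL else -0.04   # small penalty per wasted step
    return nxt, reward


def train(episodes=1000, alpha=0.5, gamma=0.9, seed=0):
    """Learn action values from random exploration (Q-learning update)."""
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state = 0
        for _ in range(100):                 # cap the episode length
            a = rng.randrange(2)             # explore by acting at random
            nxt, reward = step(state, ACTIONS[a])
            future = 0.0 if nxt == GOAL else max(q[nxt])
            # Move the estimate toward reward plus discounted future value.
            q[state][a] += alpha * (reward + gamma * future - q[state][a])
            state = nxt
            if state == GOAL:
                break
    return q


def greedy_policy(q):
    """Pick the higher-valued action in each non-goal cell."""
    return ["left" if row[0] > row[1] else "right" for row in q[:GOAL]]


print(greedy_policy(train()))
```

Nobody tells the agent that moving right is the correct strategy. It discovers this because moves toward the goal end up with higher estimated value than moves away from it.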

The match between AlphaGo and Lee Sedol attracted a lot of attention from the media and the public, as it pitted human intelligence against artificial intelligence in a highly competitive and complex game. The victory of AlphaGo over Lee Sedol was a significant milestone in the development of AI, demonstrating the potential of AI to perform complex tasks that were once thought to be the exclusive domain of human intelligence.

AlphaGo’s success in the game of Go had significant implications for the future of AI research and development. It showed that deep learning and reinforcement learning techniques could be used to solve complex problems and learn new tasks, paving the way for further advances in AI technology. The victory of AlphaGo also sparked renewed interest and investment in AI research, leading to significant progress in areas like natural language processing, computer vision, and robotics.

Overall, the victory of AlphaGo over Lee Sedol was a landmark in the history of artificial intelligence. It came roughly a decade earlier than many experts had expected a computer to beat a top Go professional, and it made clear how quickly deep learning was advancing.

2020: GPT-3 and Advanced Language Models

In 2020, OpenAI released GPT-3, a state-of-the-art natural language processing model that has been hailed as a breakthrough in AI research. GPT-3 stands for “Generative Pre-trained Transformer 3,” and it is the third iteration of a series of language models developed by OpenAI.

GPT-3 is a massive deep learning model that was trained on a vast amount of text, including books, articles, and websites. It has 175 billion parameters, which made it one of the largest and most complex language models ever created at the time of its release.

One of the most significant advances in GPT-3 is its ability to generate human-like text. It can write essays, stories, and even computer code with remarkable fluency and accuracy. GPT-3’s language generation capabilities have been used in a wide range of applications, from chatbots and virtual assistants to content creation and language translation.

GPT-3’s language generation capabilities are made possible by its deep learning architecture, which allows it to learn from large amounts of data and generate responses based on that learning. It also has the ability to understand context and generate responses that are appropriate to the situation.

GPT-3’s release has sparked a lot of excitement in the AI community, as it represents a significant step towards creating more advanced AI systems that can understand and interact with humans more effectively. It has the potential to revolutionize the way we interact with machines, making them more human-like and easier to use.

Final Thoughts

As we’ve seen, the history of AI is a long and fascinating one, filled with many breakthroughs and setbacks. From ancient automata to advanced deep learning models, AI has come a long way over the centuries. But where is it headed next? What new breakthroughs and innovations lie ahead?

As AI continues to evolve and develop, it raises many questions and challenges. Will machines eventually surpass human intelligence, and if so, what will that mean for our society? How can we ensure that AI is used ethically and responsibly? And what role will humans play in a world dominated by intelligent machines?

In the words of Stephen Hawking, “The rise of powerful AI will be either the best or the worst thing ever to happen to humanity. We do not yet know which.” But by continuing to push the boundaries of AI research and development, and by engaging in thoughtful and ethical discussions about its implications, we can work towards creating a future where AI is a force for good, and where humans and machines can coexist in harmony.