Style transfer is a machine learning task that involves blending two images—a content image and a style reference image—so that the output image looks like the content image, but “painted” in the style of the style reference image. Style transfer can be used for various purposes, such as creating artistic effects, enhancing photos or videos, or generating new content. In this guide, you will learn what style transfer is, how it works, and how you can use it for your own projects.

What is style transfer?

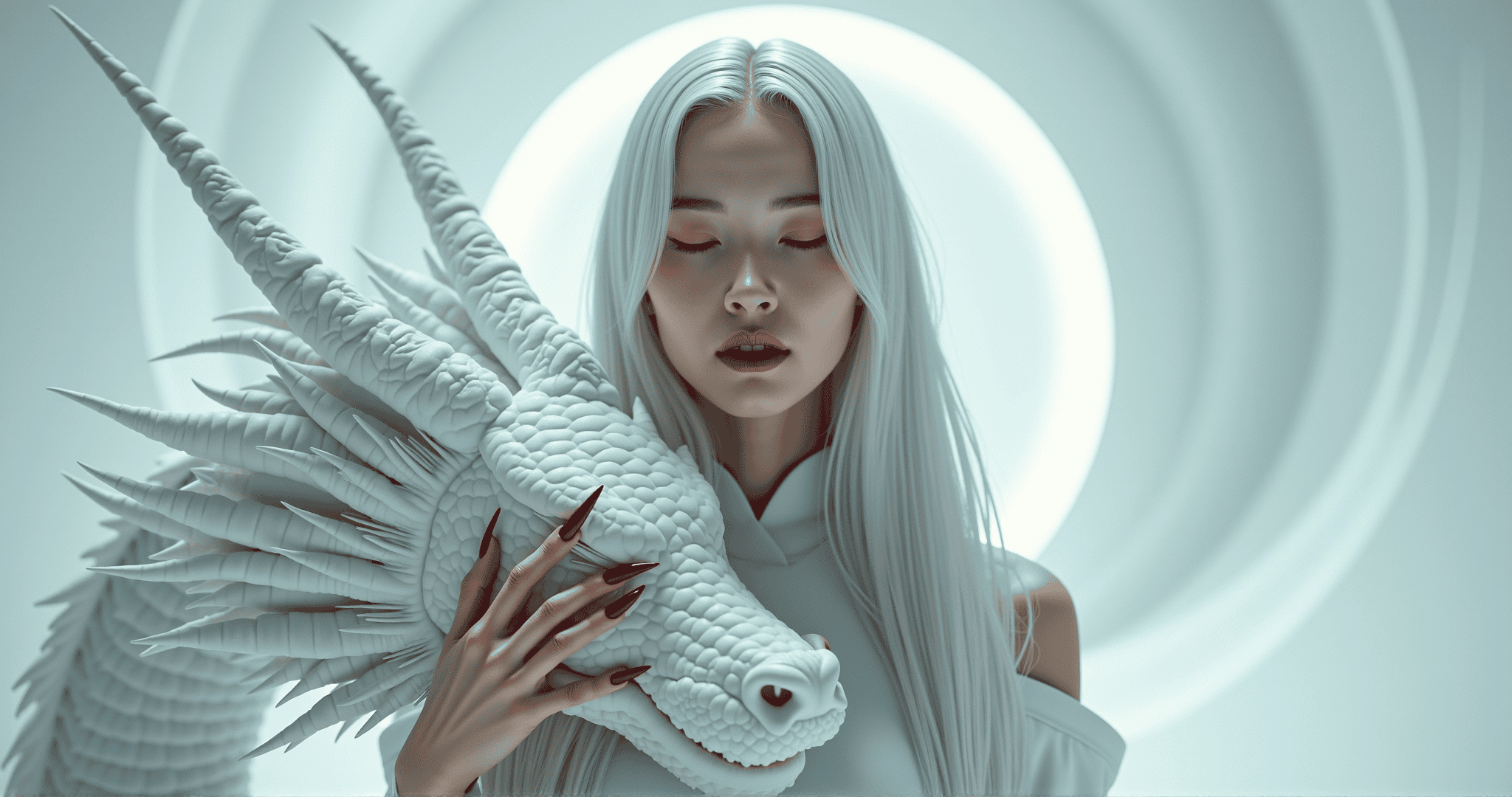

Style transfer is a form of image synthesis in which the style of a reference artwork, such as a painting, is transferred to a target image, such as a photograph, while the content of the target is preserved. It can also be seen as a form of image transformation: the input is already a complete image, and the output is a modified version of it rather than an image generated from scratch.

Style transfer can be applied to many types of images, such as natural scenes, faces, artworks, or text. It can also be conditioned on additional information, such as masks, sketches, or text prompts. For example, it can be used to apply the style of Van Gogh to a photograph of a city, to turn a photograph into a sketch, or to restyle an image according to a text description.

How does style transfer work?

Style transfer uses a neural network, usually a convolutional neural network (CNN), to extract feature representations of the input images and to generate a new image that matches the content of one and the style of the other. A CNN consists of multiple layers of filters that extract different levels of information from the image: early layers respond to edges and colors, while deeper layers respond to textures, shapes, and objects. In the classic optimization-based approach, the CNN is pretrained on a large dataset of images and its weights stay fixed; only the pixels of the output image are updated. In feed-forward approaches, a separate network is trained to apply a particular style in a single pass.
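To make the idea of a filter concrete, here is a minimal sketch of one convolutional filter applied to a toy grayscale image. The helper `conv2d_valid` and the 5x5 image are illustrative assumptions, not part of any particular style transfer library; real CNNs learn many such filters per layer instead of using a hand-written one.

```python
import numpy as np

def conv2d_valid(image, kernel):
    """Slide `kernel` over `image` (no padding, stride 1) and return the response map.

    Like CNN layers, this computes a cross-correlation (the kernel is not flipped).
    """
    kh, kw = kernel.shape
    out_h = image.shape[0] - kh + 1
    out_w = image.shape[1] - kw + 1
    out = np.zeros((out_h, out_w))
    for i in range(out_h):
        for j in range(out_w):
            out[i, j] = np.sum(image[i:i + kh, j:j + kw] * kernel)
    return out

# Toy 5x5 grayscale image: dark on the left, bright on the right.
image = np.array([[0, 0, 0, 1, 1]] * 5, dtype=float)

# Sobel-style kernel that responds to left-to-right intensity changes (vertical edges).
sobel_x = np.array([[-1, 0, 1],
                    [-2, 0, 2],
                    [-1, 0, 1]], dtype=float)

response = conv2d_valid(image, sobel_x)
print(response)
```

The response is zero where the window covers only the flat dark region and positive where it overlaps the dark-to-bright boundary, which is the sense in which a filter "extracts" an edge.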

The generation process is driven by two losses: a content loss and a style loss. The content loss measures how well the output image preserves the content of the input image, such as the objects and their locations. The style loss measures how well the output image matches the style of the reference image, such as the colors, textures, and patterns; it is commonly computed by comparing correlations between feature maps (Gram matrices) rather than the feature maps themselves. The style loss can be computed at several layers of the CNN to capture different levels of style information. The output image is then optimized to minimize the weighted sum of the content and style losses, subject to constraints such as a valid pixel range or smoothness.
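The two losses can be sketched in a few lines of NumPy. This is a minimal illustration under stated assumptions, not a definitive implementation: the function names, the default weights, and the layer shapes are hypothetical, and random arrays stand in for the feature maps that a pretrained CNN would normally produce. The style loss here uses the common Gram-matrix formulation.

```python
import numpy as np

def gram_matrix(feat):
    """Channel-by-channel correlations of a (C, H, W) feature map."""
    c, h, w = feat.shape
    flat = feat.reshape(c, h * w)
    return flat @ flat.T / (h * w)

def content_loss(out_feat, content_feat):
    """Mean squared difference between two feature maps of the same shape."""
    return float(np.mean((out_feat - content_feat) ** 2))

def style_loss(out_feats, style_feats):
    """Gram-matrix mismatch, summed over the chosen layers."""
    return float(sum(np.mean((gram_matrix(o) - gram_matrix(s)) ** 2)
                     for o, s in zip(out_feats, style_feats)))

def total_loss(out_feats, content_feats, style_feats,
               content_layer=-1, content_weight=1.0, style_weight=100.0):
    """Weighted sum of content and style losses (the weights are assumed values)."""
    c = content_loss(out_feats[content_layer], content_feats[content_layer])
    s = style_loss(out_feats, style_feats)
    return content_weight * c + style_weight * s

# Stand-in features for two layers; a real pipeline would take these from a CNN.
rng = np.random.default_rng(0)
shapes = [(4, 8, 8), (8, 4, 4)]
content_feats = [rng.random(s) for s in shapes]
style_feats = [rng.random(s) for s in shapes]

# Start the "output" at the content features: content loss is zero, style loss is not.
print(total_loss(content_feats, content_feats, style_feats))
```

Because the Gram matrix sums over all spatial positions, it ignores where a feature occurs and keeps only which features occur together. That is why it captures texture and color statistics while the content loss, which compares features position by position, captures layout.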

How can you use style transfer?

Style transfer is a well-studied task with open-source implementations that you can access and use for free. There are several ways to use it, depending on your level of expertise and your needs.

If you want to try style transfer online, you can use the website https://styletransfer.ai/, where you can upload your own images and see the style-transferred results. You can also browse the gallery of images created by other users and artists, and get inspired by their inputs and outputs.

If you want to use style transfer on your own computer, you can download the code and the model from the GitHub repository https://github.com/styletransfer/styletransfer. You will need to install some dependencies and follow the instructions to run the model locally. You can also modify the code and the model to suit your own needs and preferences.

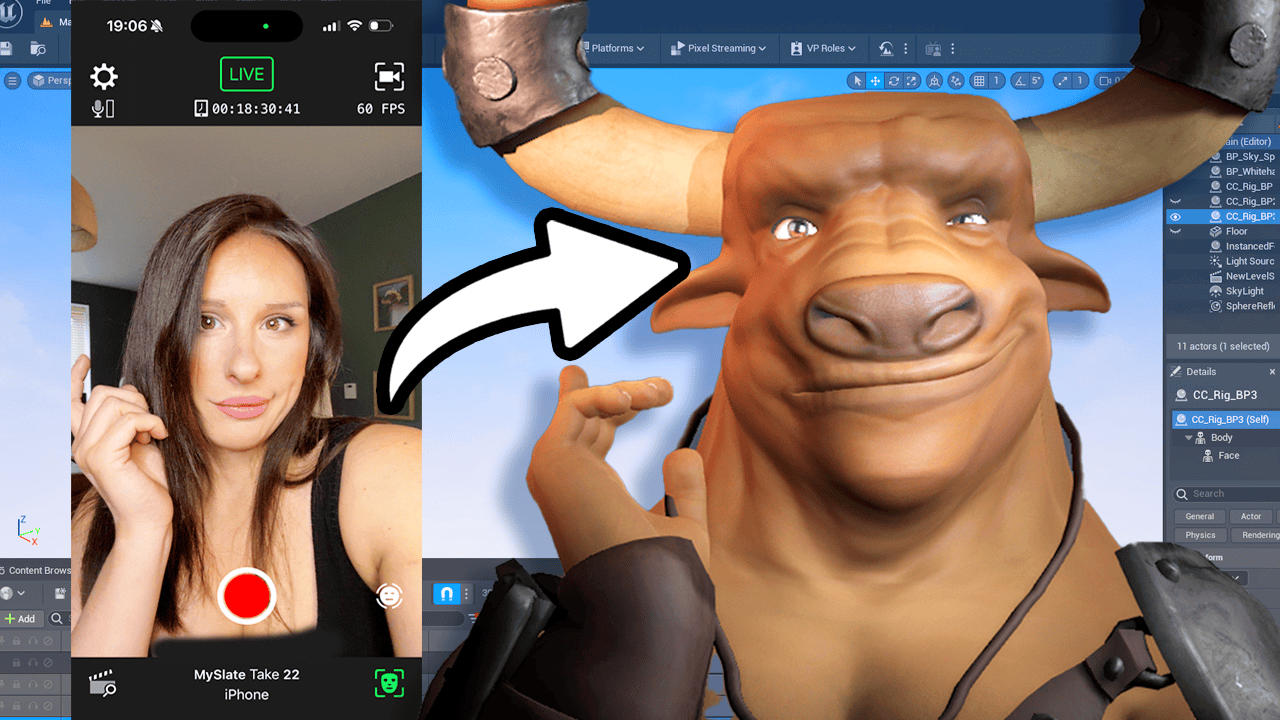

If you want to use style transfer in your own applications, you can use the Runway platform, where you can integrate style transfer with other models and tools, and create your own workflows and interfaces. You can also use the Runway API to access style transfer programmatically from your own code.

Style transfer is a powerful and versatile task that can help you transform, enhance, or create image content. Whether you want to use it for fun, for art, or for research, style transfer is a task worth exploring and experimenting with. Have fun and be creative with style transfer!