A little like when the lights finally turn on in the club at the end of the night, OpenAI’s new tool shines a light on content churned out with GPT-3, and tells you how confident it is in that prediction.

OpenAI’s new free Text Classifier is an important step forward in monitoring the use of content generated by artificial intelligence (AI). The tool estimates whether a piece of text was produced by a model such as GPT-3 and reports how confident it is in that prediction, rating the text as very unlikely, unlikely, unclear if it is, possibly, or likely AI-generated.

This classifier is the first active step the company is taking to ensure that AI-generated content is properly monitored and tracked.

Before OpenAI released its Text Classifier, there had been some discussion about watermarking AI-generated content, which would enable search engine crawlers to recognise and index the content. However, this new classifier goes a step further, giving the public an active role in tracking the use of GPT-3-generated content.

OpenAI’s Text Classifier is a welcome development for those who are concerned about the amount of content being generated by AI. By taking a proactive stance and monitoring the use of AI-generated content, OpenAI is making sure that these pieces of content are properly identified and regulated.

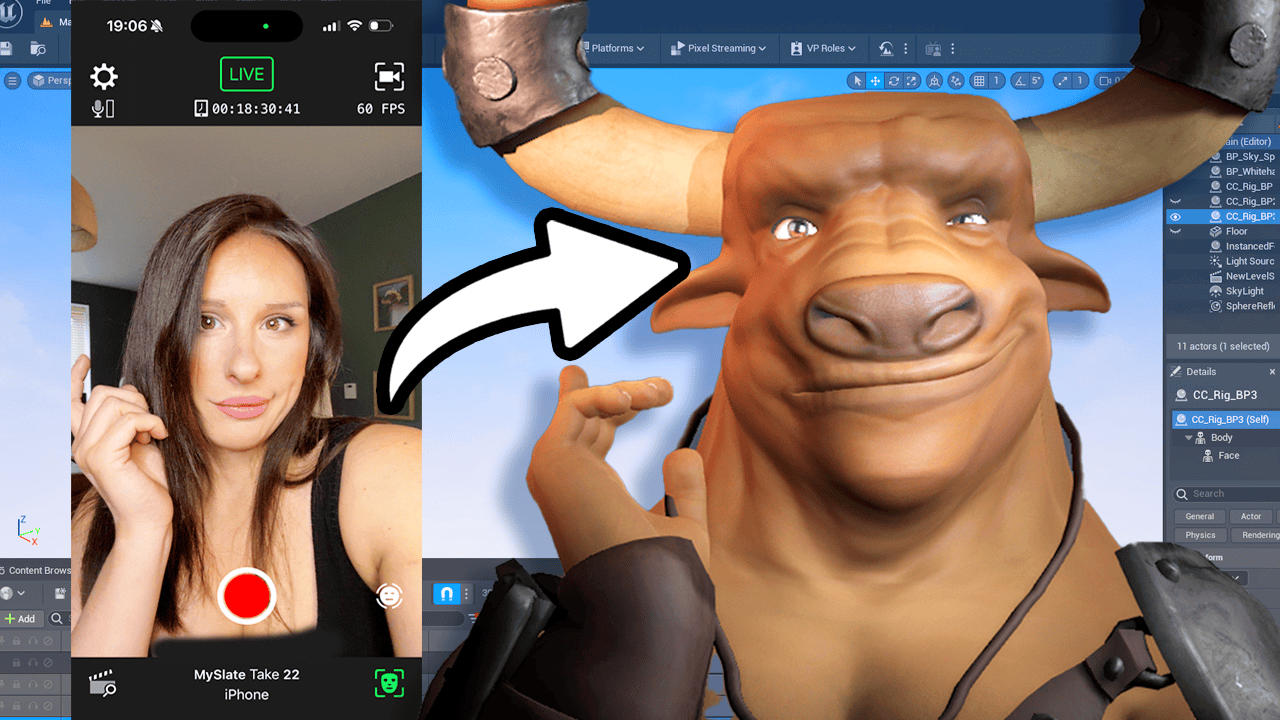

Testing the AI Classifier

Yesterday, OpenAI announced the release of its AI detector application, AI Classifier. Everyone jumped on the announcement to check their content and see if it was AI generated or not. Today, we’re testing it out to see how well it works.

One Click Writers Test

We’re going to use different one-click writers for the test: Textbuilder AI, Autoblogging Writer, Katteb, OpenAI’s Playground and ChatGPT. To set the bar, we’ll start with ChatGPT and ask for a funny story about a dog, at least 1,200 characters long.

Testing ChatGPT

The AI Classifier said the story was possibly AI generated, even though the text came from OpenAI’s own model. As a quick preview of the remaining tests: the same prompt in the Playground got the same result, possibly. A different one-click writer, Katteb, got an unlikely result, and so did Autoblogging Writer. Lastly, Textbuilder AI came back as possibly again. We tried using Rytr, but its character limit was too low.

So we can’t really say that the AI Classifier works as expected. Even on text generated by OpenAI’s own models, the results weren’t that great. We’ll have to wait and see how OpenAI responds.

Testing with OpenAI’s Playground

The next step we took was testing the AI Classifier with OpenAI’s Playground. We asked it for a description of a beach and the result was possibly AI generated. We then tried writing some of our own text, asking for words that describe a beach. The AI detector said it was unlikely AI generated.

Testing Using Textbuilder AI

To make sure the results weren’t biased, we tested the AI classifier using Textbuilder AI. We asked it to summarize what the beach is like, and the AI classifier said it was possibly AI generated. We wanted to make sure we weren’t missing something, so we tried a few more times and got the same result each time.

Testing With Rytr

We moved on to testing the AI Classifier with Rytr, an AI writing assistant. Unfortunately, its character limit was too low, meaning we couldn’t use it for the tests. We were hoping to get a sense of how the AI Classifier handles longer pieces of text, but this was not possible.

Testing With Autoblogging Writer

Our next test was Autoblogging Writer. We asked it for an article about the history of the internet, and the result was unlikely AI generated. We also asked it to describe the future of the internet and got the same result.

Testing With ChatGPT

Finally, we tested the AI Classifier with ChatGPT. We asked it to tell us a funny story about a dog and, again, got the same result: possibly AI generated. We tested the same prompt a few times, and each time we got the same result.

Results Summary

We tested the AI Classifier using different one-click writers and it gave us mixed results. For most of the tests, we got the same result, which was that the text was possibly AI generated. However, it gave us unlikely when we used Autoblogging Writer and Katteb. The only platform we couldn’t test was Rytr, because of its low character limit.

Conclusion

Overall, it appears that the AI Classifier is quite limited in its ability to detect AI-generated content. The results were mixed, and one test, with Rytr, couldn’t be run at all. Until OpenAI can come up with a more reliable way to detect AI-generated content, the AI Classifier won’t be of much use. It looks like, at this point, the best way to go is to just manually check the content you produce.

FAQ

Q: What is the new AI classifier?

A: The new AI classifier is a tool trained to distinguish between text written by a human and text written by AIs from a variety of providers. It was developed to inform mitigations for false claims that AI-generated text was written by a human, such as running automated misinformation campaigns, using AI tools for academic dishonesty, and positioning an AI chatbot as a human.

Q: How reliable is the classifier?

A: The classifier is not fully reliable. In OpenAI’s evaluations on a challenge set of English texts, the classifier correctly identifies 26% of AI-written text (true positives) as “likely AI-written,” while incorrectly labeling human-written text as AI-written 9% of the time (false positives). Its reliability typically improves as the length of the input text increases.
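To get a feel for what those two rates mean in practice, here is a minimal Python sketch. The 26% and 9% figures come from the answer above; the batch of 100 AI-written and 900 human-written texts is a purely hypothetical mix chosen for illustration, and the function name `expected_flags` is our own.

```python
def expected_flags(n_ai, n_human, tpr=0.26, fpr=0.09):
    """Expected outcome when a batch of texts is run through the classifier.

    tpr: share of AI-written text labeled "likely AI-written" (26% above).
    fpr: share of human-written text wrongly labeled AI-written (9% above).
    Returns (correct flags, wrong flags, share of flags that are correct).
    """
    true_flags = n_ai * tpr
    false_flags = n_human * fpr
    flagged = true_flags + false_flags
    precision = true_flags / flagged if flagged else 0.0
    return true_flags, false_flags, precision


if __name__ == "__main__":
    # Hypothetical batch: 100 AI-written texts and 900 human-written texts.
    tp, fp, precision = expected_flags(n_ai=100, n_human=900)
    print(f"correctly flagged AI texts: {tp:.0f}")
    print(f"wrongly flagged human texts: {fp:.0f}")
    print(f"share of flags that are correct: {precision:.0%}")
```

With that assumed mix, about 26 AI-written texts and about 81 human-written texts get flagged, so only roughly a quarter of the flags point at text that was actually AI-written. A different mix gives a different share, which is one reason a flag on its own says little.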

Q: What are the limitations of the classifier?

A: The classifier has a number of important limitations. It should not be used as a primary decision-making tool, but instead as a complement to other methods of determining the source of a piece of text. It is very unreliable on short texts (below 1,000 characters). Also, human-written text will sometimes be incorrectly but confidently labeled as AI-written. OpenAI recommends using the classifier only for English text; it performs significantly worse in other languages and is unreliable on code. Text that is very predictable cannot be reliably identified. Additionally, AI-written text can be edited to evade the classifier.
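Since short inputs are the easiest limitation to trip over, a quick length check before pasting text into the classifier can save a wasted test. The sketch below is only an illustration: the 1,000-character threshold is the one quoted above, while the function name `long_enough` and the choice to ignore surrounding whitespace are our own assumptions, not part of OpenAI's tool.

```python
MIN_CHARS = 1000  # threshold quoted in the FAQ answer above


def long_enough(text: str, min_chars: int = MIN_CHARS) -> bool:
    """Return True if the text has at least min_chars characters.

    Leading and trailing whitespace is ignored (our assumption), so a
    padded snippet does not pass by accident.
    """
    return len(text.strip()) >= min_chars


if __name__ == "__main__":
    sample = "The dog chased its own tail around the garden. " * 10
    if long_enough(sample):
        print("Long enough to test.")
    else:
        print(f"Too short: {len(sample.strip())} of {MIN_CHARS} characters.")
```

Passing this check only means the text clears the stated minimum; it says nothing about whether the classifier's verdict on it will be right.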