Stack Overflow has recently made headlines for banning answers written by ChatGPT. But why did it take this drastic measure? In this blog post, we’ll explore the reasons why Stack Overflow took such a hard stance against content generated by a machine learning system, and what the implications could be for developers who rely on these tools.

Introduction to ChatGPT

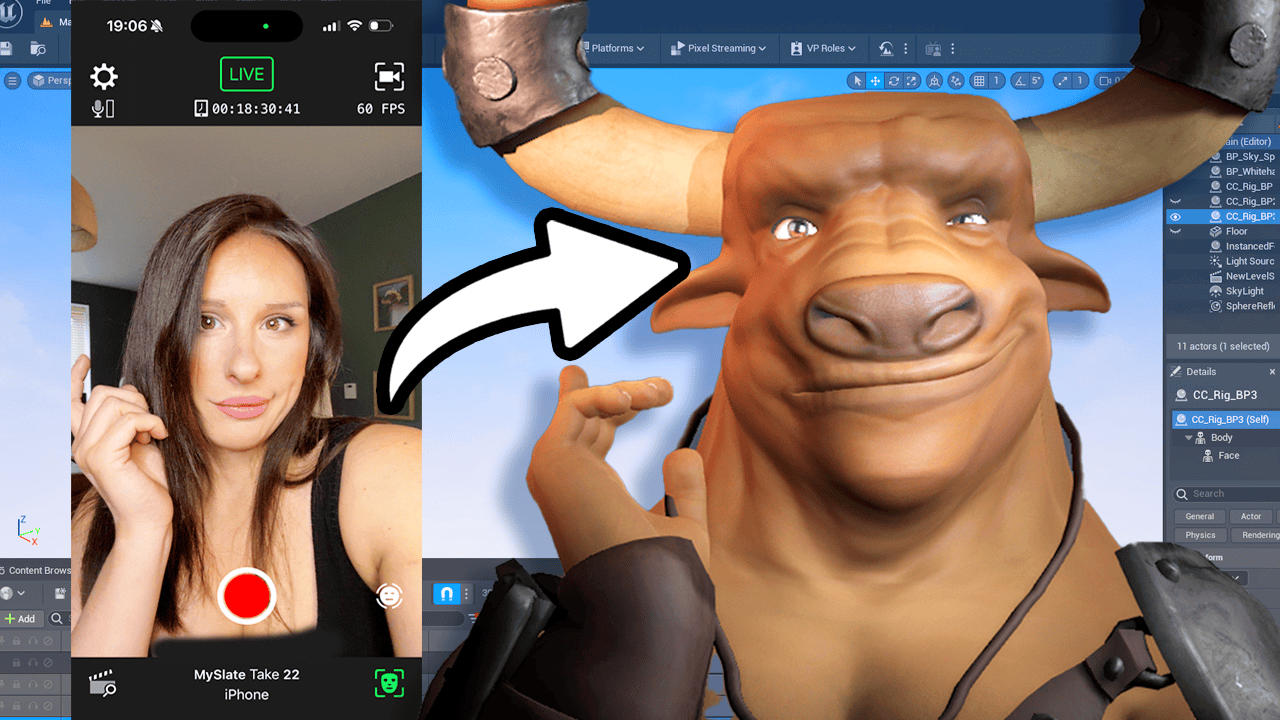

ChatGPT is an AI chatbot created by OpenAI that can generate answers to coding questions. Its answers often look well written and plausible, yet they are frequently wrong or lack the explanation a reader would need to verify them. Stack Overflow, a popular programming Q&A site, has temporarily banned all answers created by ChatGPT because of this inaccuracy. The site’s moderators argue that these AI-generated responses are “substantially harmful” both to the site and to its users, who rely on accurate information when seeking help with their coding problems. Stack Overflow has not yet made a permanent decision on how it will handle content generated by ChatGPT.

The ban is a preventative measure that stays in place until Stack Overflow staff have had time for a larger discussion about the implications of AI-generated answers. Until then, any user found posting content from ChatGPT could face sanctions from Stack Overflow.

This temporary ban is just one example of how Stack Overflow is working to ensure its platform remains a safe and reliable source of programming knowledge and expertise.

What is Stack Overflow?

Stack Overflow is an online community of developers and technology experts who come together to ask questions, share their knowledge and help each other solve coding problems. It is the go-to destination for programmers looking for solutions to coding problems or advice on best practices. Stack Overflow was founded in 2008 and has since become one of the largest online communities for developers, with over 13 million unique visitors per month from around the world. It offers a variety of features such as Q&A forums, code snippets, tutorials, and more to help its users learn new technologies and find solutions to their coding challenges.

Problems with Automated Programming Tools

Automated programming tools have become increasingly popular in recent years as a way of making coding and software development easier and faster. But while these tools offer some advantages, they also bring potential problems. The most common issues include code that is difficult to read or maintain, bugs that are hard to track down, and security risks that arise when developers do not understand the code they are shipping. Automated programming tools also can’t always account for the finer details of a project, so users may spend more time fixing mistakes than they would have spent coding the project by hand. Developers should understand these pitfalls before relying heavily on automated programming tools for any coding task.

Lack of Quality Control in Automated Tools

Automated tools are becoming increasingly popular for completing tasks that were once done by humans. As these tools become more widely used, the need for quality control grows with them. Without proper oversight, automated tools can produce results that are inaccurate or unreliable, and errors that are not caught quickly can lead to serious mistakes with potentially disastrous consequences.

Quality control is important in order to ensure that tasks are completed accurately and efficiently. It involves developing a system of checks and balances to make sure that any automated tool is working properly and producing accurate results. This includes testing the accuracy of the tool itself, as well as assessing the data it produces and ensuring it meets certain standards of reliability. Without quality control measures in place, automated tools may be prone to producing inaccurate or misleading results which could have serious implications on decisions made based on this data.

It is essential for any organization using automated tools to put in place effective quality control processes before deploying them into production environments. Doing so will help prevent any incorrect outcomes from occurring due to faulty or outdated algorithms or data sets being used by the tool. Quality assurance teams should also be established in order to monitor these systems closely and identify any areas where improvement may be needed before going live with an automation solution.

By building rigorous quality assurance into their automated solutions, organizations can get reliable output from their AI-driven systems while reducing the risks that come with unchecked automation.

Impact on Human Programmers

The introduction of ChatGPT has raised fears among many developers that it will make their jobs obsolete. However, Stack Overflow’s ban on ChatGPT suggests that this is far from being the case. Human coders are still essential for creating accurate and reliable solutions and for providing context to complex programming problems. The decision also serves as a reminder that programming is an ever-evolving field in which machines still have a long way to go before they can match human expertise.

Overall, Stack Overflow’s ban on ChatGPT is a welcome move that reassures human programmers of their importance in the industry. It also sets an example of how tech companies should responsibly manage AI technology so as not to undermine its potential while preserving the livelihoods of human workers in tech fields.

Potential for Abuse of Automation Tools

Automation tools like OpenAI’s ChatGPT can be incredibly useful when it comes to writing code. However, AI-generated answers are not always accurate or relevant, and they can confuse or mislead users who are looking for reliable solutions. To prevent this, Stack Overflow has taken the precautionary measure of temporarily banning users from sharing answers to coding questions that were generated by ChatGPT.

OpenAI’s language model can generate syntactically correct code that compiles or runs, so it looks right, but it may still contain subtle bugs. Allowing these answers to remain on the platform could lead developers to use faulty code and could spread misinformation, which is something Stack Overflow wants to avoid.
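To make "looks correct but has a subtle bug" concrete, here is a small hypothetical illustration. It is not output captured from ChatGPT, and the function names `add_tag_buggy` and `add_tag_fixed` are invented for this sketch. The first version runs without error and passes a casual glance, but it shares one list across calls because Python evaluates default arguments only once.

```python
def add_tag_buggy(tag, tags=[]):
    # Bug: the default list is created once, when the function is defined,
    # so every call that omits `tags` appends to the same shared list.
    tags.append(tag)
    return tags


def add_tag_fixed(tag, tags=None):
    # Fix: use None as a sentinel and create a fresh list on each call.
    if tags is None:
        tags = []
    tags.append(tag)
    return tags


first = add_tag_buggy("python")
second = add_tag_buggy("sql")

# Both names point at the same list, which now holds both tags.
print(first is second)
print(second)

# The fixed version keeps calls independent of each other.
print(add_tag_fixed("python"))
print(add_tag_fixed("sql"))
```

A reviewer who only checks that the code runs would miss this, which is the kind of problem that makes unreviewed generated answers risky.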

Stack Overflow is known for providing a reliable source of help for developers and software engineers, so it is understandable why they have chosen to take this step. The platform wants to ensure that users get reliable solutions when they ask questions related to coding on their site. This is why they have decided to temporarily ban AI-generated answers until further notice.

Security Vulnerabilities in Automated Code

The use of automated code in development projects has become increasingly common as AI chatbot technology improves. However, this development also brings with it new security risks that must be addressed. Automated code can contain errors or vulnerabilities that could lead to data breaches, malicious activity, or other forms of exploitation. As a result, it is important for developers to take steps to ensure their code is secure before deploying it into production environments.

One of the primary concerns when using automated code is the potential for introducing security vulnerabilities into the codebase. AI chatbots generate code from patterns learned from large amounts of existing code, some of which is itself insecure, and they do not verify that their output is safe. As a result, these systems may produce code with unintended consequences that create serious security risks if it is deployed without proper testing and verification.

For example, an AI chatbot may generate code that contains an SQL injection vulnerability because it builds a database query by concatenating unvalidated user input from a web form or API request. If malicious actors exploit this vulnerability, they could gain access to sensitive data or take control of other parts of the system. Similarly, if an AI chatbot produces code with a cross-site scripting (XSS) vulnerability, attackers could inject malicious scripts into the affected web application and potentially compromise user accounts and the data stored in them.
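The two vulnerabilities described above can be sketched in a few lines of Python using only the standard library. This is a minimal illustration under stated assumptions, not a complete defense: the `users` table, the sample rows, and the helper names (`make_demo_db`, `find_user_unsafe`, `find_user_safe`, `render_comment`) are all hypothetical and exist only for this example.

```python
import html
import sqlite3


def make_demo_db():
    # Hypothetical in-memory database with two sample rows.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (name TEXT, secret TEXT)")
    conn.executemany(
        "INSERT INTO users VALUES (?, ?)",
        [("alice", "a-secret"), ("bob", "b-secret")],
    )
    return conn


def find_user_unsafe(conn, name):
    # Vulnerable: user input is pasted straight into the SQL text.
    query = f"SELECT name, secret FROM users WHERE name = '{name}'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, name):
    # Safer: a parameterized query treats the input as data, not as SQL.
    query = "SELECT name, secret FROM users WHERE name = ?"
    return conn.execute(query, (name,)).fetchall()


def render_comment(comment):
    # Escaping user text before placing it in HTML blunts basic XSS payloads.
    return f"<p>{html.escape(comment)}</p>"


conn = make_demo_db()
payload = "nobody' OR '1'='1"

leaked = find_user_unsafe(conn, payload)   # the OR clause matches every row
blocked = find_user_safe(conn, payload)    # no user has this literal name

print(len(leaked), len(blocked))
print(render_comment("<script>alert(1)</script>"))
```

The unsafe and safe functions differ by a single line, which is why this class of bug is easy to miss when generated code is copied without review.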

To reduce the risk of security vulnerabilities in automated software development projects, developers should always thoroughly review any generated code before deploying it into production environments and consider implementing additional layers of protection such as static source analysis tools and fuzzing tests to identify potential issues before they become problems. Additionally, developers should also ensure their systems are updated regularly with the latest security patches and harden

Unintended Consequences of Using ChatGPT

Aside from inaccuracy issues, using ChatGPT could also make it difficult for developers to identify the source of their answer and give credit where it is due. Furthermore, it could lead to an erosion of trust in Stack Overflow as users may no longer be able to rely on its community-sourced answers and solutions.

In order to prevent these unintended consequences, Stack Overflow has temporarily banned all answers generated by ChatGPT until further discussion can be had about its use on the platform. This move shows that Stack Overflow is serious about ensuring its users get accurate and reliable coding solutions from its platform and will continue making sure that happens in the future.

Open Source Alternatives to ChatGPT

Open source chatbot tools exist that developers can inspect, modify, and improve as needed, and they benefit from a community of contributors who help develop the tool further and provide support. Note that these are frameworks for building conversational bots rather than drop-in replacements for ChatGPT’s code generation. Open source options include Botkit, Rasa, and the Microsoft Bot Framework SDK. Dialogflow, Wit.ai, and Amazon Lex are often mentioned alongside them, but they are hosted proprietary services rather than open source projects. Each of these tools offers its own features and capabilities for building chatbots.

TL;DR

In summary, Stack Overflow has temporarily banned AI-generated answers from ChatGPT. The decision was made because of concerns that users were posting content generated by the bot that looked plausible but was inaccurate. The site is withholding a permanent decision on AI-generated answers until after further discussion among staff members. In the meantime, the ban highlights the risk of relying too heavily on automated systems for coding questions: their responses can be inaccurate, and checking and fixing them can take more effort than writing the code by hand.