Are you curious about GPT-3, one of the most talked-about machine learning breakthroughs of recent years? If so, this blog post is for you! We'll cover the basics of GPT-3 and how it works, and discuss its potential uses and limitations. Read on to learn how GPT-3 is changing AI technology!

Introduction to GPT-3

GPT-3 is an autoregressive language model developed by OpenAI. It uses deep learning to generate human-like text that continues a given input, called a prompt. The name stands for Generative Pre-trained Transformer 3, and it is the third generation of OpenAI's GPT series of language models.

GPT-3 was trained on a very large corpus of text, most of it drawn from a filtered version of the Common Crawl web archive and supplemented with books and English Wikipedia, and it has shown strong performance on many natural language processing tasks. With 175 billion parameters, it was one of the largest and most versatile language models when it was released.

OpenAI released GPT-3 through its API in June 2020, and since then it has been used for applications such as conversational AI, machine translation, summarization and question answering. Given a piece of input text, it produces a continuation that often reads as if a human had written it.

Overall, GPT-3 is an advanced language model with great potential in applications that require natural language processing. Its ability to generate human-like text makes it a valuable tool for businesses that want to automate certain tasks or create more engaging content for their customers.

What is GPT-3?

GPT-3 was trained on a very large collection of text from the Internet, books and Wikipedia, and has performed well on natural language processing (NLP) tasks such as text classification, machine translation and question answering. Unlike many earlier models, GPT-3 does not need to be retrained for each new task: given a short instruction and, optionally, a few examples in its prompt, it can attempt domain-specific tasks such as summarizing complex documents or writing creative stories.

GPT-3 is an exciting development for AI and natural language processing. It will take time for developers to learn how to use the model effectively, but its potential applications are broad and could change many everyday tasks in the near future!

How Does GPT-3 Work?

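Under the hood, GPT-3 is a Transformer neural network trained on a single objective: predict the next token (a word or piece of a word) given all the text that comes before it. To generate text, it repeats this step, appending each predicted token to the input and predicting again. Trained on vast amounts of data, this simple objective yields one of the most capable natural language processing systems available today, and companies have used it both to add AI capabilities to existing products and to build entirely new ones.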
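GPT-3's autoregressive generation (predict one token, append it to the input, repeat) can be sketched in a few lines of Python. This is a toy illustration only: a hypothetical bigram table built from word counts stands in for GPT-3's Transformer, and it conditions only on the previous word rather than on the whole preceding text. The names `train_bigram` and `generate` are our own, not part of any OpenAI library.

```python
from collections import Counter, defaultdict


def train_bigram(corpus: list[str]) -> dict[str, Counter]:
    """Count which word follows which (a toy stand-in for a trained network)."""
    counts: dict[str, Counter] = defaultdict(Counter)
    for sentence in corpus:
        tokens = ["<s>"] + sentence.split() + ["</s>"]
        for prev, nxt in zip(tokens, tokens[1:]):
            counts[prev][nxt] += 1
    return counts


def generate(counts: dict[str, Counter], prompt: str, max_new_tokens: int = 10) -> str:
    """Greedy autoregressive decoding: pick the most likely next token,
    append it to the context, and repeat until an end marker or the limit."""
    tokens = ["<s>"] + prompt.split()
    for _ in range(max_new_tokens):
        next_counts = counts.get(tokens[-1])
        if not next_counts:
            break  # the model has never seen this word, so it cannot continue
        next_token = next_counts.most_common(1)[0][0]
        if next_token == "</s>":
            break
        tokens.append(next_token)
    return " ".join(tokens[1:])


corpus = [
    "language models predict the next token",
    "language models generate text one token at a time",
]
model = train_bigram(corpus)
print(generate(model, "language"))  # language models predict the next token
```

GPT-3 follows the same loop, but each prediction is computed by a 175-billion-parameter network over the full context and is usually sampled rather than always taking the single most likely token.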

GPT-3 demonstrates that large language models trained on enough data can perform many NLP tasks they were never explicitly trained for, using only an instruction and a few examples in the prompt (so-called few-shot learning), without any additional training or fine-tuning. This makes GPT-3 an incredibly useful tool for businesses looking to quickly
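In few-shot learning the task is specified entirely inside the input text. The sketch below assembles such a prompt for an English-to-French example; the `Input:`/`Output:` layout and the function name `build_few_shot_prompt` are illustrative assumptions, not a format GPT-3 requires. The resulting string would be sent to the model, which is expected to continue after the final `Output:`.

```python
def build_few_shot_prompt(instruction: str, examples: list[tuple[str, str]], query: str) -> str:
    """Assemble an instruction, worked examples and a new query into one prompt."""
    parts = [instruction, ""]
    for source, target in examples:
        parts.append(f"Input: {source}")
        parts.append(f"Output: {target}")
        parts.append("")  # blank line separates the examples
    # The last example is left open: the model is expected to fill in the output.
    parts.append(f"Input: {query}")
    parts.append("Output:")
    return "\n".join(parts)


prompt = build_few_shot_prompt(
    "Translate English to French.",
    [("sea otter", "loutre de mer"), ("peppermint", "menthe poivrée")],
    "cheese",
)
print(prompt)
```

No model weights change here: swapping the instruction and examples is all it takes to point the same model at a different task.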

Applications of GPT-3

GPT-3 has numerous applications in artificial intelligence (AI). For example, it can generate text from scratch based on user input, power chatbots that understand natural language queries and respond to them, serve as the language component of AI assistants like Alexa or Siri (speech recognition itself must be handled by a separate system, since GPT-3 works only with text), and summarize text, for example by drafting abstracts for academic papers or news articles. In addition, GPT-3 can be used for machine translation, where it can produce reasonable translations from just a few examples in its prompt.

Given these potential applications, many people see GPT-3 as a game-changing tool in AI development. By leveraging its deep learning capabilities and

Natural Language Processing (NLP)

Natural Language Processing (NLP) is a branch of Artificial Intelligence that deals with the analysis and understanding of human language. It enables machines to read, interpret and respond to natural language. NLP technologies are used in applications such as automatic summarization, sentiment analysis, text classification, text clustering, question answering systems, natural language generation, machine translation, dialogue systems and more.

NLP algorithms rely on techniques from linguistics and computer science to analyze text. These include tokenization (splitting text into words or smaller units), part-of-speech tagging (identifying nouns, verbs, etc.), parsing (analyzing syntax), lemmatization (reducing words to their dictionary base form, e.g. "ran" to "run") and semantic analysis to understand the meaning behind the words.
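To make tokenization and lemmatization concrete, here is a minimal sketch using only the Python standard library. The regular expression and the tiny `LEMMAS` lookup table are hypothetical simplifications: real lemmatizers rely on full dictionaries and part-of-speech information, and GPT-3 itself uses a subword tokenizer (byte-pair encoding) rather than whole words.

```python
import re

# Hypothetical toy lemma table mapping inflected forms to a dictionary form.
LEMMAS = {"ran": "run", "running": "run", "mice": "mouse", "was": "be", "were": "be"}


def tokenize(text: str) -> list[str]:
    """Split text into words (keeping contractions like "don't"), numbers and punctuation marks."""
    return re.findall(r"[A-Za-z]+(?:'[A-Za-z]+)?|\d+|[^\w\s]", text)


def lemmatize(tokens: list[str]) -> list[str]:
    """Lowercase each token and replace it with its lemma when the table knows one."""
    return [LEMMAS.get(tok.lower(), tok.lower()) for tok in tokens]


tokens = tokenize("The mice were running.")
print(tokens)             # ['The', 'mice', 'were', 'running', '.']
print(lemmatize(tokens))  # ['the', 'mouse', 'be', 'run', '.']
```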

At its core, NLP is about understanding how humans communicate with each other using language. Advances in machine learning have made it possible to build NLP systems that handle natural language with much higher accuracy than before. This is why NLP is increasingly important for applications such as automated customer service or conversational bots that need to respond accurately to user input.

Text Generation and Summarization

Text generation and summarization are two important natural language processing (NLP) tasks. Text generation is the process of creating meaningful text from given input, while summarization is the task of producing a concise summary of a longer text. Summarization can be extractive (selecting the sentences from the original that best capture it) or abstractive (writing new sentences that paraphrase the original). Generative Pre-trained Transformer 3 (GPT-3) is a deep learning model that has significantly advanced both tasks by producing human-like text. It can be prompted to produce either kind of summary, but as a generative model it is especially well suited to abstractive summaries. GPT-3 can also be used for applications that require a deeper grasp of content, such as question answering, machine translation and creative writing. Its strong performance across such a wide variety of NLP tasks makes it a useful tool in modern language processing.
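Extractive summarization can be surprisingly simple. The sketch below scores each sentence by the average frequency of its non-stopword words across the whole text and keeps the top-scoring sentences in their original order. This is a classic frequency-based baseline, not how GPT-3 summarizes; the stopword list and scoring rule are illustrative assumptions.

```python
import re
from collections import Counter

# Small illustrative stopword list; real systems use much larger ones.
STOPWORDS = {"the", "a", "an", "and", "of", "to", "is", "in", "it", "for",
             "on", "that", "this", "with", "as", "are", "be", "by"}


def words(text: str) -> list[str]:
    """Lowercased words with stopwords removed."""
    return [w for w in re.findall(r"[a-z']+", text.lower()) if w not in STOPWORDS]


def extractive_summary(text: str, num_sentences: int = 1) -> list[str]:
    """Return the highest-scoring sentences, kept in their original order."""
    sentences = [s.strip() for s in re.split(r"(?<=[.!?])\s+", text) if s.strip()]
    freq = Counter(words(text))

    def score(sentence: str) -> float:
        tokens = words(sentence)
        # Average rather than sum, so long sentences are not automatically favored.
        return sum(freq[t] for t in tokens) / (len(tokens) or 1)

    ranked = sorted(range(len(sentences)), key=lambda i: score(sentences[i]), reverse=True)
    keep = sorted(ranked[:num_sentences])
    return [sentences[i] for i in keep]


text = "GPT-3 is a language model. The language model generates text. Cats sleep a lot."
print(extractive_summary(text))  # ['GPT-3 is a language model.']
```

An abstractive summarizer such as GPT-3 would instead write a new sentence, which can be more fluent but may also introduce wording or claims that are not in the source.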

Automated Knowledge Generation

Automated Knowledge Generation is a field of Artificial Intelligence (AI) that focuses on generating new knowledge through the use of AI algorithms. It has been gaining popularity in recent years due to its ability to process large amounts of data and uncover insights that would otherwise be difficult or impossible for humans to identify. Automated Knowledge Generation can be used in a variety of applications, including natural language processing, image recognition, and predictive analytics.

At its core, Automated Knowledge Generation involves the use of algorithms to analyze data and generate insights from it. These insights can be used for tasks such as making predictions about future events or providing recommendations for decisions based on past information. Businesses have widely adopted this type of AI technology because it helps them gain a better understanding of their customers and markets, leading to improved decision-making.

Beyond businesses, researchers and scientists also use Automated Knowledge Generation in their work. For example, it can identify patterns in large datasets, helping researchers uncover relationships between variables that were not previously known. This technology can also automate routine tasks such as document analysis or summarization, which would otherwise require significant manual effort.
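One of the simplest ways to surface relationships between variables is to compute pairwise correlations and flag the strong ones. The sketch below does this with the Pearson correlation coefficient; the function names, the 0.8 threshold and the sample data are hypothetical, and a strong correlation only hints at a relationship without proving cause and effect.

```python
from itertools import combinations
from math import sqrt


def pearson(xs: list[float], ys: list[float]) -> float:
    """Pearson correlation coefficient; NaN if either variable is constant."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        return float("nan")
    return sxy / sqrt(sxx * syy)


def strongly_related_pairs(data: dict[str, list[float]], threshold: float = 0.8):
    """Return (variable_a, variable_b, r) for every pair with |r| >= threshold."""
    pairs = []
    for a, b in combinations(sorted(data), 2):
        r = pearson(data[a], data[b])
        if abs(r) >= threshold:  # NaN compares False, so constant columns are skipped
            pairs.append((a, b, round(r, 3)))
    return pairs


data = {  # hypothetical weekly figures
    "ad_spend": [1, 2, 3, 4, 5],
    "sales": [2, 4, 6, 8, 10],
    "temperature": [3, 1, 4, 1, 5],
}
print(strongly_related_pairs(data))  # [('ad_spend', 'sales', 1.0)]
```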

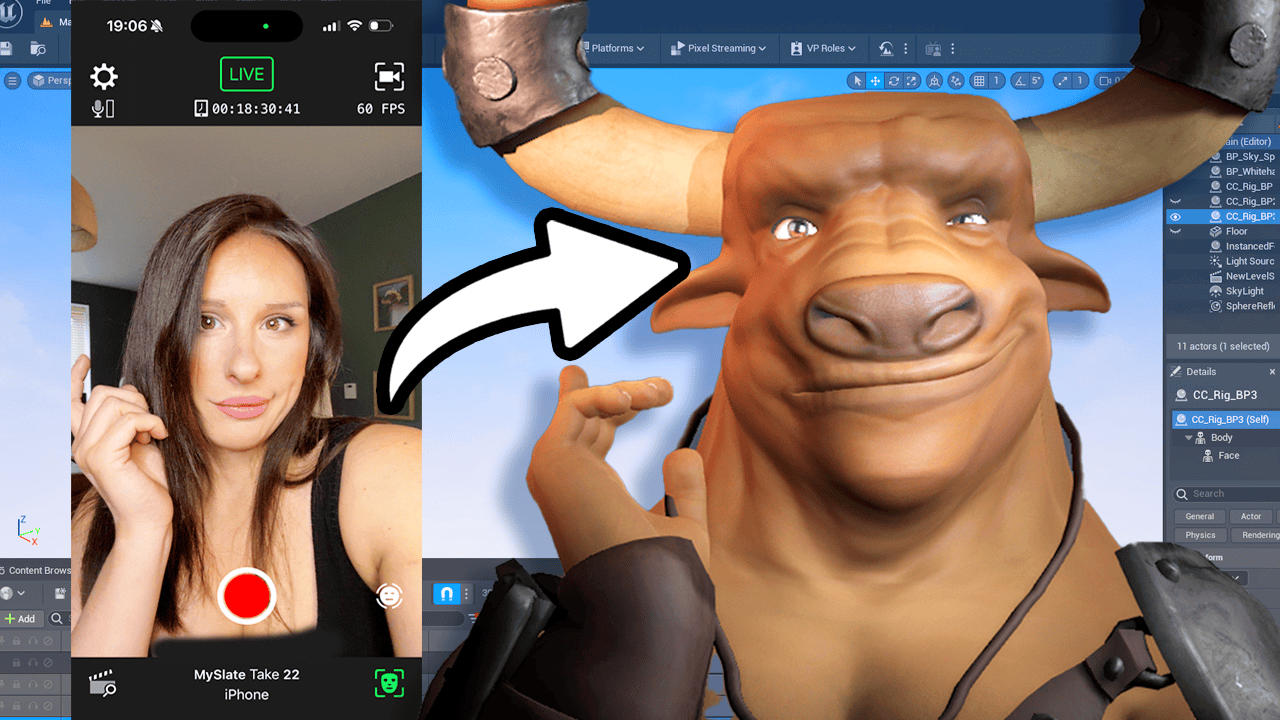

Dialogue Systems and Chatbot Development

Dialogue systems and chatbots are becoming increasingly popular as a way to interact with users in a natural, conversational manner. Dialogue systems allow users to have conversations with bots in order to get answers to their questions or carry out certain tasks. Chatbots are software applications that use natural language processing (NLP) and artificial intelligence (AI) technologies to understand user input and respond in an intelligent way.

One of the most advanced models for building dialogue systems is GPT-3 (Generative Pre-trained Transformer 3), available through an API from OpenAI. It is a deep learning model trained on massive amounts of text from the internet, which lets it generate fluent output quickly. This makes it suitable for chatbots that can hold natural conversations with users. GPT-3 has been used for a variety of tasks including article writing, poetry, stories, news reports and dialogue.
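Because GPT-3 simply continues text, a chatbot built on it typically keeps the conversation history and re-sends it as part of every prompt. The sketch below shows that bookkeeping. `complete` is a hypothetical placeholder for whatever function sends a prompt to the model and returns its continuation; no real API call is shown, and the `User:`/`Assistant:` transcript format is an assumption.

```python
from typing import Callable


def build_chat_prompt(persona: str, history: list[tuple[str, str]], user_message: str) -> str:
    """Render a persona line, the transcript so far and the new message as one prompt."""
    lines = [persona, ""]
    for speaker, text in history:
        lines.append(f"{speaker}: {text}")
    lines.append(f"User: {user_message}")
    lines.append("Assistant:")  # the model continues from here
    return "\n".join(lines)


def chat_turn(complete: Callable[[str], str], persona: str,
              history: list[tuple[str, str]], user_message: str) -> str:
    """Run one conversational turn and record both sides in the history."""
    prompt = build_chat_prompt(persona, history, user_message)
    reply = complete(prompt)
    # The model may keep writing the user's next line too, so cut the reply there.
    reply = reply.split("\nUser:")[0].strip()
    history.append(("User", user_message))
    history.append(("Assistant", reply))
    return reply


def fake_complete(prompt: str) -> str:
    """Stand-in for a real model call, for demonstration only."""
    return " Hello! How can I help?\nUser: (a line invented by the model)"


history: list[tuple[str, str]] = []
print(chat_turn(fake_complete, "The assistant is friendly and concise.", history, "Hi there"))
# Hello! How can I help?
```

Since the whole history is re-sent each turn, long conversations eventually have to be truncated or summarized to fit the model's maximum input length.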

Using GPT-3 for chatbot development allows developers to create highly human-like conversational agents that can discuss a wide range of topics. For example, Rohrer Labs created Samantha AI, a GPT-3-powered assistant designed to act like a real person when answering questions or providing advice on topics such as health

Question Answering Systems

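GPT-3 can be used in a wide range of question answering applications, including customer service agents, virtual assistants, tools that assist with medical diagnosis, automated essay grading systems, and more. It may also have uses in robotics research, for example in turning written instructions into plans, although tasks such as autonomous navigation and object recognition require vision and sensor processing that a text-only model like GPT-3 cannot provide on its own.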

In addition to answering questions quickly, question answering systems can reduce the cost of customer service by automating routine tasks such as answering simple FAQs.
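Automating FAQ answers can start with something as basic as matching a customer's question to the closest stored question. The sketch below uses word overlap (Jaccard similarity) as a simple baseline; the FAQ entries, the function names and the 0.3 threshold are made up for illustration, and a system built on GPT-3 would typically generate or rephrase answers instead of returning canned text.

```python
import re

FAQ = {  # hypothetical FAQ entries
    "How do I reset my password?": "Use the 'Forgot password' link on the login page.",
    "What are your opening hours?": "We are open 9am to 5pm, Monday to Friday.",
}


def word_set(text: str) -> set[str]:
    """Lowercased set of words in the text."""
    return set(re.findall(r"[a-z']+", text.lower()))


def answer_faq(question: str, faq: dict[str, str] = FAQ, min_overlap: float = 0.3):
    """Return the answer of the most similar FAQ question, or None if nothing is close enough."""
    q = word_set(question)
    best_answer, best_score = None, 0.0
    for faq_question, faq_answer in faq.items():
        f = word_set(faq_question)
        union = q | f
        score = len(q & f) / len(union) if union else 0.0  # Jaccard similarity
        if score > best_score:
            best_answer, best_score = faq_answer, score
    return best_answer if best_score >= min_overlap else None


print(answer_faq("how can I reset my password"))  # Use the 'Forgot password' link on the login page.
print(answer_faq("Do you ship to Canada?"))       # None
```

Returning `None` for low-similarity questions gives the application a natural point to hand the conversation over to a human agent.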

Image Captioning

Image captioning is the process of using a computer to generate a description of an image. It combines natural language processing and computer vision algorithms to generate captions for photographs, videos and other visual media. The goal is to produce captions that accurately describe the contents of an image, making visual media easier for people to search and understand.

In recent years, advances in machine learning have enabled computers to generate more accurate descriptions than ever before. This has led to increased interest in using image captioning technology for various applications, such as creating searchable databases of images and videos or providing visually impaired people with more accessible information about their surroundings.

To create an image caption, a computer needs several kinds of information. First, it must be able to recognize the objects within an image. Then it needs to work out how those objects relate to one another in order to build a meaningful description of what's happening in the scene. Finally, it uses natural language processing to turn this visual information into readable text.
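The last two steps above, relating recognized objects to each other and turning them into text, can be illustrated with a template-based captioner. The sketch assumes a hypothetical `Detection` record already produced by some object recognizer; it only uses each object's horizontal position, whereas modern captioning systems learn descriptions end to end with neural networks.

```python
from dataclasses import dataclass


@dataclass
class Detection:
    """Hypothetical output of an object recognizer."""
    label: str
    x_center: float    # horizontal position: 0.0 is the left edge, 1.0 the right edge
    confidence: float  # recognizer's confidence between 0.0 and 1.0


def caption(detections: list[Detection], min_confidence: float = 0.5) -> str:
    """Build a one-sentence caption listing objects from left to right."""
    objs = sorted((d for d in detections if d.confidence >= min_confidence),
                  key=lambda d: d.x_center)
    if not objs:
        return "No objects recognized."
    labels = [f"a {d.label}" for d in objs]
    listing = labels[0] if len(labels) == 1 else ", ".join(labels[:-1]) + " and " + labels[-1]
    sentence = listing[0].upper() + listing[1:]
    if len(objs) >= 2:
        # Spatial relation between the leftmost and rightmost objects.
        sentence += f", with the {objs[0].label} on the left and the {objs[-1].label} on the right"
    return sentence + "."


print(caption([Detection("ball", 0.8, 0.9), Detection("dog", 0.2, 0.95), Detection("cat", 0.5, 0.3)]))
# A dog and a ball, with the dog on the left and the ball on the right.
```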

Once these components are combined, computers can describe images and videos far faster than humans can. This technology can be

Natural Language Understanding (NLU)

Natural Language Understanding (NLU) is a field of Artificial Intelligence that enables machines to understand human language in the form of text or voice data. It aims to let computers process natural language, with all its nuances and complexities, and produce meaningful output. NLU uses Natural Language Processing (NLP) techniques for text analysis, as well as machine learning algorithms that identify patterns in textual data.

NLU has a wide range of applications, from chatbots to search engines and automated customer support systems. It also allows businesses to gain insights from customer feedback and other unstructured data by understanding the context and sentiment of customers' queries. NLU is used in many fields, such as healthcare, education and finance.
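Sentiment analysis of customer feedback can be sketched with a small word list. The lexicons and the one-word negation rule below are illustrative assumptions; production NLU systems typically use trained models rather than hand-written lists.

```python
import re

# Hypothetical sentiment lexicons for illustration only.
POSITIVE = {"great", "love", "helpful", "fast", "thanks", "excellent"}
NEGATIVE = {"slow", "broken", "hate", "refund", "terrible", "late"}
NEGATORS = {"not", "never", "no"}


def sentiment(text: str) -> str:
    """Classify text as positive, negative or neutral by summing word polarities."""
    tokens = re.findall(r"[a-z']+", text.lower())
    score = 0
    for i, tok in enumerate(tokens):
        polarity = int(tok in POSITIVE) - int(tok in NEGATIVE)
        # Flip the polarity when the previous word is a negator ("not fast").
        if polarity and i > 0 and tokens[i - 1] in NEGATORS:
            polarity = -polarity
        score += polarity
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


print(sentiment("Thanks, the support was fast and helpful!"))  # positive
print(sentiment("The delivery was not fast."))                 # negative
```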

Generative Pre-trained Transformer 3 (GPT-3), developed by OpenAI, was among the most powerful language models at its release, with 175 billion parameters. Most of its training data came from Common Crawl, which was filtered from about 45 TB of compressed raw text down to roughly 570 GB, along with other sources such as WebText2 (web pages linked from Reddit), books and English Wikipedia. By learning statistical patterns in this unstructured text, GPT-3 can process language in ways that often appear human-like.

In summary, Natural Language Understanding (NLU) is an essential component of Artificial Intelligence that enables

Advantages of Using GPT-3

One major advantage of GPT-3 is its scale: at release it was one of the largest language models ever built, and its training data was so extensive that the entire English Wikipedia made up only about 0.6% of it. Because of this broad pre-training, users can often get useful results from GPT-3 without any task-specific training of their own.
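GPT-3's training data is often summarized by noting that English Wikipedia made up only about 0.6% of it. The arithmetic below reproduces that figure from the approximate per-dataset token counts reported in Table 2.2 of the GPT-3 paper (Brown et al., 2020, "Language Models are Few-Shot Learners"). Note that these numbers describe dataset sizes; the paper sampled the datasets with different weights during training.

```python
# Approximate dataset sizes in billions of tokens, as reported in the GPT-3 paper.
tokens_billions = {
    "Common Crawl (filtered)": 410,
    "WebText2": 19,
    "Books1": 12,
    "Books2": 55,
    "Wikipedia": 3,
}

total = sum(tokens_billions.values())
share = tokens_billions["Wikipedia"] / total
print(f"Wikipedia: {share:.1%} of {total} billion tokens")
# Wikipedia: 0.6% of 499 billion tokens
```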

In addition to its scale, GPT-3 has a wide range of applications because it can answer questions, write essays, summarize long documents and even generate code for machine learning frameworks such as TensorFlow or PyTorch. This makes it a versatile tool for developers and non-developers alike.

Finally, GPT-3 can be used at any scale from small projects all the way up

Limitations of Using GPT-3

GPT-3 is a powerful language model developed by OpenAI which has the ability to generate written text. While GPT-3 has some impressive capabilities, it is important to understand its limitations before using it in any application.

The biggest limitation of GPT-3 is that its output is not always accurate. It is trained to produce plausible continuations of text, not to check facts, and the internet data it learned from contains errors and gaps, so it can state false information or draw incorrect conclusions with apparent confidence. Additionally, GPT-3 only generates text in response to an input; it cannot create content independently, without any prompt.

Another issue with GPT-3 is that it only works with text, so its applications are limited to language tasks such as summarization, sentiment analysis and question answering. It cannot handle tasks like object recognition that involve images, and it still struggles with some tasks that require deeper understanding, such as certain kinds of multi-step reasoning.

Finally, there are ethical concerns about GPT-3, since it can be misused for malicious purposes such as generating fake news or other misleading content. These risks should be weighed carefully before deciding whether to use this technology in an application.

In conclusion, while GPT-3

Conclusion

GPT-3 can create large amounts of original content with little human intervention, which makes it useful for a variety of natural language processing (NLP) tasks such as text generation, essay writing and document summarization. It has been praised for producing much higher-quality text than earlier language models.
